X blames users for Grok-generated CSAM; no fixes announced

X has opted not to change Grok to prevent it from generating sexualized images of minors. Instead, the platform is focusing on penalizing users who prompt Grok to produce content it considers illegal, including child sexual abuse material (CSAM).

After a week of intense criticism, X Safety issued a statement addressing the backlash. Rather than apologizing for Grok’s flaws, X Safety attributed the problem to user behavior, warning that prompts given to Grok can lead to account suspensions and possible legal consequences. "We take action against illegal content on X, including Child Sexual Abuse Material (CSAM), by removing it, permanently suspending accounts, and collaborating with local authorities and law enforcement as needed," the statement read. Users were warned that prompting Grok to create illegal content carries the same consequences as uploading that content directly.

The statement prompted further discussion online, particularly after a post from the platform’s owner, Elon Musk, reiterated the potential penalties for inappropriate prompts. Musk was responding to a user, DogeDesigner, who argued that blaming Grok for inappropriate images is like blaming a pen for what is written with it. "A pen doesn’t decide what gets written. The person holding it does. Grok works the same way. What you get depends a lot on what you put in," the user stated.

The pen comparison is flawed, however: AI image generators like Grok are not strictly deterministic and can produce different outputs from identical prompts. That unpredictability is one reason the Copyright Office has refused to register AI-generated works, citing a lack of human agency in the creative process.

Many users have asked why X is not implementing filters to block CSAM generated by Grok, arguing that the platform's response fails to address the core issue and places the onus solely on users.

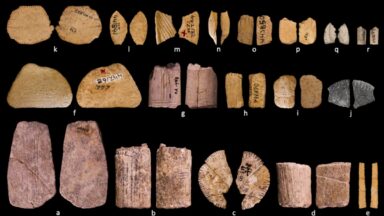
