OpenAI explains why GPT-5 hallucinates: ‘Language models can mislead’

OpenAI has drawn attention to the persistent problem of 'hallucinations' in its language models, acknowledging that even its most capable systems can produce confidently incorrect information. In a blog post published on September 5, the company defined hallucinations as plausible but false statements generated by AI, which can arise even in response to simple questions.

According to OpenAI, the problem is reinforced by the way these models are trained and evaluated. Current benchmarks often reward guessing over acknowledging uncertainty, which pushes AI systems to provide an answer rather than admit a lack of knowledge. For instance, an earlier model gave three different incorrect responses when asked for the title of an author's dissertation, and similarly varied answers when asked for a birth date. OpenAI likens the situation to a multiple-choice exam: a student who does not know the answer is better off guessing than leaving the question blank, because a guess might earn points while a blank guarantees a zero. When models are graded solely on accuracy, they are likewise driven to guess rather than to express uncertainty.

Newer models such as GPT-5 have shown a decrease in hallucinations, particularly in tasks requiring reasoning. Research from OpenAI suggests that teaching models to refrain from answering when unsure, a form of AI humility, can lead to lower error rates, even if it slightly reduces their apparent accuracy on traditional benchmarks. The company advocates a shift in evaluation frameworks: penalizing confident errors more heavily than abstentions, and granting partial credit for appropriate expressions of uncertainty.

Hallucinations originate in the pretraining process, in which language models learn by predicting the next word in extensive text datasets that carry no labels marking statements as true or false. Models pick up consistent patterns such as grammar and spelling effectively, but rare or arbitrary facts, such as someone's birthday, follow no pattern that can be inferred from the surrounding text, so predicting them remains error-prone.

OpenAI's paper also addresses misconceptions about hallucinations. Although some questions are inherently unanswerable, hallucinations are not inevitable, because a model can decline to answer, and even smaller models can avoid them by recognizing their own limitations. Rather than being a random glitch, hallucinations are a predictable outcome of the statistical training process combined with evaluation schemes that reward guessing. The company says that reducing hallucination rates is an ongoing effort and that reforming evaluation methods is vital for minimizing confidently incorrect outputs in future AI models.
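
The exam analogy comes down to simple expected-value arithmetic. The sketch below is an illustration only, not OpenAI's actual evaluation code: the function names, the one-point reward for a correct answer, and the penalty and credit values are all assumptions chosen for the example. It shows that under accuracy-only grading a guess never scores worse than abstaining, while a penalty for wrong answers makes abstaining the better choice when the model is unlikely to be right.

```python
def expected_score(p_correct, wrong_penalty=0.0, abstain_credit=0.0, guess=True):
    """Expected score on one question (hypothetical scoring scheme).

    p_correct:      chance the model is right if it answers.
    wrong_penalty:  points deducted for a wrong answer
                    (0.0 corresponds to accuracy-only grading).
    abstain_credit: points awarded for saying "I don't know".
    A correct answer is assumed to be worth 1 point.
    """
    if not guess:
        return abstain_credit
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def should_guess(p_correct, wrong_penalty=0.0, abstain_credit=0.0):
    """True if guessing has a strictly higher expected score than abstaining."""
    guess_score = expected_score(p_correct, wrong_penalty, abstain_credit, guess=True)
    abstain_score = expected_score(p_correct, wrong_penalty, abstain_credit, guess=False)
    return guess_score > abstain_score


if __name__ == "__main__":
    p = 0.1  # assumed: the model has a 10% chance of guessing a rare fact correctly
    print("accuracy-only grading, guess?", should_guess(p))
    print("wrong answers cost 1 point, guess?", should_guess(p, wrong_penalty=1.0))
```

Under these assumptions, guessing beats abstaining whenever `p_correct` exceeds `(wrong_penalty + abstain_credit) / (1 + wrong_penalty)`. With accuracy-only grading that threshold is zero, which is why a model optimized for such a benchmark has no reason to admit uncertainty.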

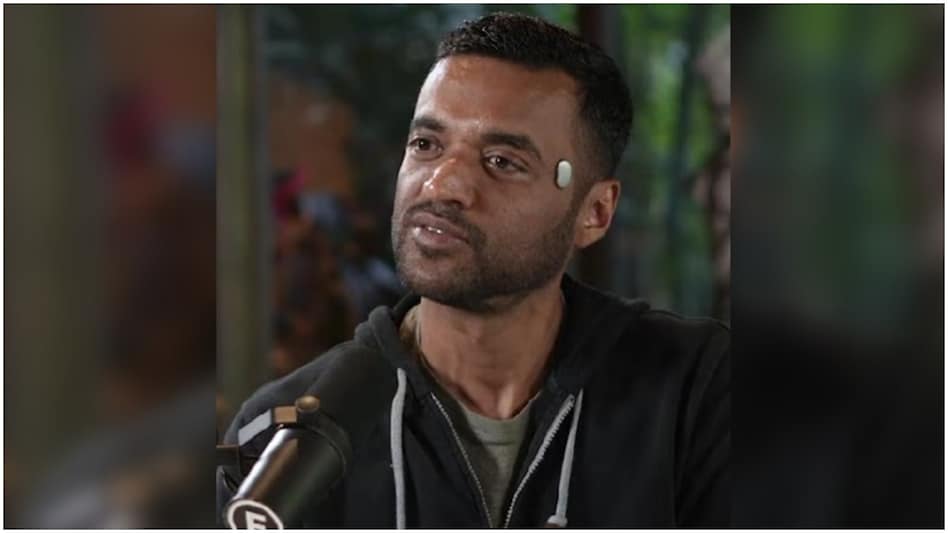
