Microsoft reveals second generation of its AI chip in effort to bolster cloud business

Microsoft has officially launched its latest artificial intelligence chip, the Maia 200, a significant step in its cloud computing strategy. The chip is meant to offer an alternative to Nvidia's leading processors as well as to the offerings of Microsoft's cloud rivals, Amazon and Google.

The Maia 200 arrives two years after its predecessor, the Maia 100, which was never made available for rent to cloud customers. In a blog post, Scott Guthrie, Microsoft's executive vice president for cloud and AI, wrote that the new chip will be more widely available to customers in the future, and described it as the most efficient inference system Microsoft has ever deployed. Developers, researchers, and contributors to open-source AI projects can apply for a preview of the Maia 200 software development kit.

Microsoft's superintelligence team, led by Mustafa Suleyman, will use the chip. It will also power the Microsoft 365 Copilot add-on for productivity software and the Microsoft Foundry service, which is used to build on top of AI models.

The launch comes amid soaring demand from generative AI model developers such as Anthropic and OpenAI, as companies increasingly build AI agents and other products on popular AI models. Data center operators are racing to expand their computing capacity while keeping power consumption under control.

Microsoft will first deploy Maia 200 chips in its U.S. Central data center region, then expand to the U.S. West 3 region and other locations. The chips are manufactured on Taiwan Semiconductor Manufacturing Co.'s 3-nanometer process. Each server holds four chips, connected with Ethernet cables rather than the InfiniBand standard that Nvidia offers.

According to Guthrie, the Maia 200 delivers 30% higher performance than alternative chips at the same price. Each chip carries more high-bandwidth memory than Amazon Web Services' third-generation Trainium chip or Google's seventh-generation tensor processing unit. Microsoft can interconnect up to 6,144 Maia 200 chips, which the company says reduces energy use and total cost of ownership. Microsoft has previously demonstrated its GitHub Copilot coding assistant running on Maia 100 processors.

ChatGPT Surges to 900 Million Users, Consuming Power Equivalent to Small Nations

Recent studies reveal that ChatGPT's energy consumption is staggering, with each query requiring at least ten times the ...

Business Today | Mar 13, 2026, 10:05

Motional's Autonomous Ioniq 5 Joins Uber's Robotaxi Fleet in Las Vegas

Uber has expanded its robotaxi services by incorporating autonomous vehicles from Motional, a company backed by Hyundai....

TechCrunch | Mar 13, 2026, 13:30

Elon Musk Revives Talent Search Amid xAI Leadership Exodus

In a bid to strengthen his AI startup xAI, Elon Musk has announced plans to revisit previous job applications as he face...

Business Insider | Mar 13, 2026, 08:40Seizing the Moment: Investors Eye Promising AI Stock Amid Recent Dip

In the ever-evolving landscape of artificial intelligence, a prominent investing club has announced an increase in their...

CNBC | Mar 13, 2026, 13:05

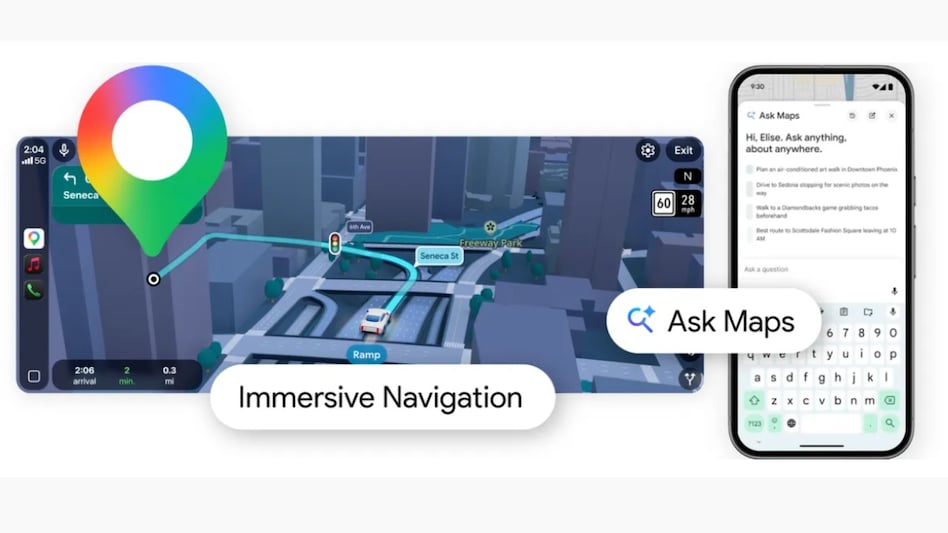

Google Maps Unveils AI-Enhanced Features for a Seamless Navigation Experience

Google Maps is set to revolutionize the way users navigate their surroundings with the introduction of innovative AI-dri...

Business Today | Mar 13, 2026, 06:00