Facebook is using private photos from your phone gallery to train Meta AI models

Meta is piloting a controversial Facebook feature that has raised new concerns about user privacy. The feature scans users' phone photo libraries, including images and videos they have never shared, to power the company's AI offerings. As first reported by TechCrunch, users may see a pop-up when they try to upload a Story on Facebook. The prompt asks them to turn on 'cloud processing,' a function that grants Meta ongoing access to their phone's gallery. In exchange, the company promises personalized content, such as themed photo collages and AI-generated filters for occasions like birthdays and graduations.

While the feature appears aimed at improving the user experience, agreeing to its terms allows Meta to analyze users' entire photo and video collections. That includes unpublished media, which the company can use to refine its AI capabilities by examining metadata, facial features, and the objects in each image.

Privacy advocates are particularly concerned about the lack of transparency. Meta has not formally announced the feature; instead, it has published a low-profile help page for Android and iOS users. Because of this quiet rollout, many people may consent to extensive data access without fully understanding the consequences. Once the feature is enabled, uploads happen automatically in the background, turning private, unshared media into potential material for training Meta's AI systems.

Although Meta says the feature is optional and can be turned off at any time, significant questions remain. The company states that these images are not currently used to train its generative AI models, but it has left the door open to doing so in the future. It is also unclear what rights Meta retains over user content uploaded through cloud processing.
Historically, the company has acknowledged using public content from Facebook and Instagram for AI training, yet what counts as 'public' content, and how individuals end up in these datasets, remains vague. This uncertainty is compounded by AI terms of service that took effect on June 23, 2024, which do not specify whether unpublished photos collected through cloud processing are exempt from AI training.

Users can opt out by disabling cloud processing in their settings. If they do, Meta says it will begin deleting any unpublished images from its cloud servers within 30 days. Still, this move toward automatic media scanning reflects a broader trend of tech companies collecting increasingly sensitive user data in the name of helpful AI tools. In countries like India, where smartphones often hold sensitive personal information, this kind of access may pose serious risks, especially since the feature is not adequately explained in local languages. Meta is testing the feature in the US and Canada, and a global launch could reignite debates over digital consent, algorithmic transparency, and the ethical limits of artificial intelligence.

Funding Cuts Leave Vital Ebola Research Network Stranded Amid Outbreak

As the escalating Ebola outbreak in the Ituri Province of the Democratic Republic of the Congo continues to pose a grave...

Ars Technica | May 29, 2026, 10:35

AI Valuation Surge: Anthropic Sets the Stage for a New Investment Era

Anthropic is rapidly approaching a staggering $1 trillion valuation following its latest funding round, highlighting a b...

CNBC | May 29, 2026, 12:30

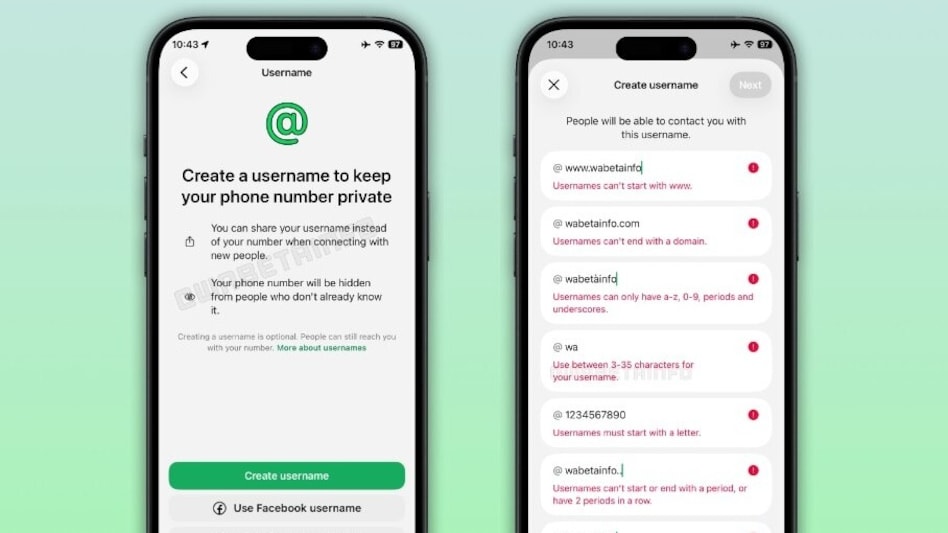

WhatsApp Introduces Username Feature to Enhance Privacy and Connectivity

WhatsApp is taking a significant step toward enhancing user privacy by introducing a username feature that is currently ...

Business Today | May 29, 2026, 10:05

Sneak Peek: Siri's Game-Changing 'Search or Ask' Feature Unveiled Ahead of WWDC 2026

As anticipation builds for Apple’s WWDC 2026 event, intriguing screenshots of the upcoming Siri app for iOS 27 have emer...

Business Today | May 29, 2026, 07:50

Revolutionizing AI: Startup XCENA Raises $135M to Tackle Memory Bottlenecks

In the world of artificial intelligence, every query to platforms like ChatGPT initiates a complex data relay process. T...

TechCrunch | May 29, 2026, 12:25