India takes the first shot at regulating AI

In a significant move towards regulating artificial intelligence, the Indian government has proposed new guidelines aimed at curbing the misuse of AI-generated content online. The Ministry of Electronics and Information Technology (MeitY) announced the draft rules, which would require social media platforms to ensure that their users disclose any content that has been generated or altered by AI.

Under the proposed regulations, social media intermediaries would be responsible for labeling AI content, with visible watermarks and labels required to cover at least 10% of the content's duration or size. Companies that fail to proactively flag violations risk losing their safe harbor protections. Industry stakeholders have been invited to provide feedback on the draft amendments until November 6, as part of an ongoing effort to address concerns about deepfake technology and its implications.

The increase in deepfake content (fabricated material that mimics a person's appearance, voice, and mannerisms) has raised alarms, especially with the advent of advanced tools like OpenAI's ChatGPT and Google's Gemini. Union IT Minister Ashwini Vaishnaw emphasized that the amendments aim to enhance accountability among users, corporations, and the government in response to the growing prevalence of deepfakes.

Enforcement will be overseen by officials of joint secretary rank and above, with additional measures in place for handling take-down requests from law enforcement. The government has consulted leading AI companies, which have said that using metadata to identify AI-altered content is feasible. The proposed regulations are a proactive step towards ensuring transparency and trust in online information.

According to Dhruv Garg, a founding partner at the India Governance and Policy Project, the amendments are crucial for addressing the complexities of AI governance in the digital landscape. However, he cautioned that any regulatory framework must strike a balance between authenticity and freedom of expression, ensuring that legitimate artistic and creative uses of synthetic media are not inadvertently restricted.

The parliamentary standing committee on home affairs has also underscored the need for a robust legal framework to manage AI-generated content, suggesting that all digital media should carry a watermark to verify its origin. This recommendation aims to mitigate the risks of content manipulation and strengthen media provenance standards.

Recent court cases have highlighted the growing problem of AI misuse, with the Delhi High Court issuing interim orders to protect individuals from unauthorized AI-generated deepfake content. High-profile figures such as film producer Karan Johar and actress Aishwarya Rai Bachchan have successfully sought legal protection against AI-driven misuse of their identities. Union Finance Minister Nirmala Sitharaman echoed the urgency of these regulations at the Global Fintech Fest, where she addressed the growing threat of deepfake videos.

As the digital landscape evolves, the new regulations reflect India's commitment to navigating the challenges posed by artificial intelligence in a responsible and accountable manner.

Sakura Internet Soars 20% as Microsoft Announces Major AI Investment in Japan

Shares of Sakura Internet experienced a remarkable surge, climbing as much as 20.2% on Friday following Microsoft's anno...

CNBC | Apr 03, 2026, 05:30

Chinese Semiconductor Sector Soars Amid AI Demand and Export Restrictions

Chinese semiconductor companies have achieved unprecedented revenue levels in the past year, largely fueled by a surge i...

CNBC | Apr 03, 2026, 05:20

Unleashing Mobile Intelligence: Google's Gemma 4 AI Models Set to Transform Devices

Google has introduced Gemma 4, the latest iteration of its open AI model family, designed to enhance reasoning and agent...

Business Today | Apr 03, 2026, 08:20

Lawsuit Claims Perplexity's 'Incognito Mode' Fails to Protect User Privacy

A recent lawsuit has raised serious allegations against Perplexity, the AI search engine, claiming that its 'Incognito M...

Ars Technica | Apr 02, 2026, 21:00

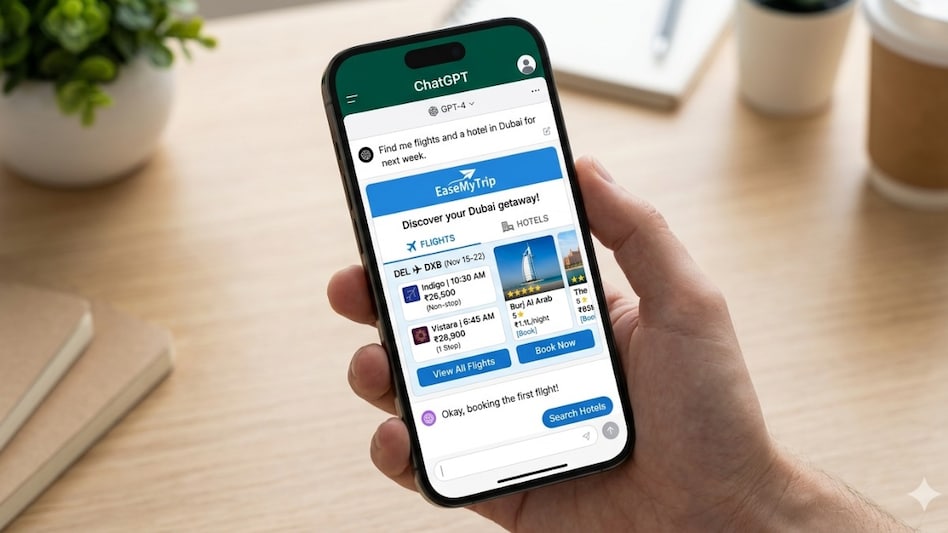

Revolutionizing Travel: EaseMyTrip Partners with ChatGPT for Seamless Bookings

In a groundbreaking move, EaseMyTrip has become the first publicly listed travel company in India to incorporate OpenAI’...

Business Today | Apr 03, 2026, 06:05