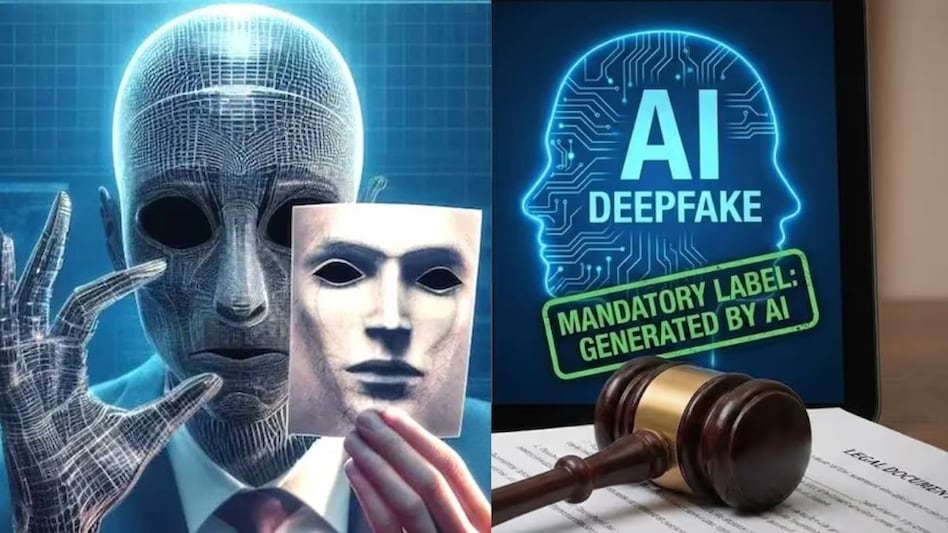

India’s new AI content rules: What social media platforms must do and what changes for users

The Indian government has introduced stricter regulations on AI-generated content on social media. The new legal obligations require platforms to identify, label, and in certain cases block such content. The changes, announced by the Ministry of Electronics and Information Technology (MeitY) and set to take effect on February 20, are notified as the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026. They amend the existing 2021 intermediary rules and extend compliance requirements to include "synthetically generated information."

The revised guidelines target misleading AI-generated content: audio, video, or images that are created or altered using AI to appear realistic and could deceive users. Basic edits such as cropping and color correction are not classified as deepfakes. The regulations focus on content that can mislead the public.

Social media platforms, including Facebook, Instagram, X, and YouTube, are now required to take more proactive measures. A platform that knowingly permits harmful AI content, or fails to take appropriate action against it, may be deemed non-compliant with its due diligence requirements.

The government has specified the types of AI-generated content that are prohibited, including deepfake videos, fraudulent voice clips, and misleading AI visuals. Users who violate the rules could face penalties, and platforms must remind users of these consequences at least once every quarter.

The timeline for compliance has also been significantly shortened, so platforms must respond more quickly to potentially harmful content. For everyday users, sharing or uploading misleading AI content could now lead to clear repercussions.

The urgency of the regulations reflects India's position as one of the largest social media markets in the world and a prominent testing ground for generative AI technologies. As AI tools become more common, the government is trying to prevent misuse before it becomes widespread, particularly given the recent surge in deepfakes and other AI-generated material, including cloned celebrity voices and fabricated political messages.
