Character.AI to block romantic AI chats for minors a year after teen's suicide

Character.AI announced a significant policy change aimed at making its platform safer for users under 18. Minors will no longer be able to engage in open-ended conversations, including romantic and therapeutic exchanges, with the company's AI chatbots. The decision follows tragic events, including the suicide of 14-year-old Sewell Setzer III, who had formed unhealthy attachments to chatbots on the app. The Silicon Valley startup says the changes are meant to make its app more age-appropriate. CEO Karandeep Anand emphasized the importance of the initiative, stating, "This is a bold step forward, and we hope this raises the bar for everybody else."

Until the change takes full effect on November 25, when open-ended chat will be removed entirely for under-18 users, minors will be limited to two hours of open-ended chat per day. To enforce the new rules, Character.AI is introducing an age verification system that combines first-party and third-party software to determine users' ages. The company is working with Persona, an age-assurance firm already used by platforms such as Discord.

Since Anand became CEO in June, Character.AI has expanded beyond chatbot conversations, adding features such as AI-generated video feeds and storytelling formats. Minors will keep access to these features. Anand noted that roughly 10% of the app's 20 million monthly active users are under 18, a share that has declined as the app has shifted its focus.

Character.AI generates revenue mainly through advertising and a $10-per-month subscription, and the company is projected to reach an annual revenue run rate of $50 million by year-end. It will also establish an independent AI Safety Lab focused on safety research for AI in entertainment, and is inviting other companies and researchers to collaborate.
The impact of AI chatbots on minors has drawn increasing scrutiny from regulators. The Federal Trade Commission recently issued orders to several companies, including Character.AI's parent company, to assess how AI companions may affect children and teenagers. Lawmakers, responding to the same concerns, have proposed banning AI companion chatbots for minors altogether. As the tech industry grapples with the ethical implications of AI companionship, Character.AI's measures may set a precedent for how other companies approach safety for younger users.

Anand framed the move in personal terms: "I have a six-year-old as well, and I want to make sure that she grows up in a safe environment with AI."

For those in crisis or experiencing suicidal thoughts, support is available through the Suicide & Crisis Lifeline at 988, which connects callers with trained counselors.

Tata Group's Chairman Champions AI as a Transformative Force at India Summit

At the India AI Impact Summit 2026, N Chandrasekaran, Chairman of the Tata Group, declared that artificial intelligence ...

Business Today | Feb 19, 2026, 06:00

Meta and Nvidia Forge Major Alliance to Transform AI Infrastructure

Meta is significantly enhancing its partnership with Nvidia through a groundbreaking agreement described as 'multigenera...

Business Insider | Feb 18, 2026, 22:45India's AI Future: A Call to Retain Talent and Embrace Innovation

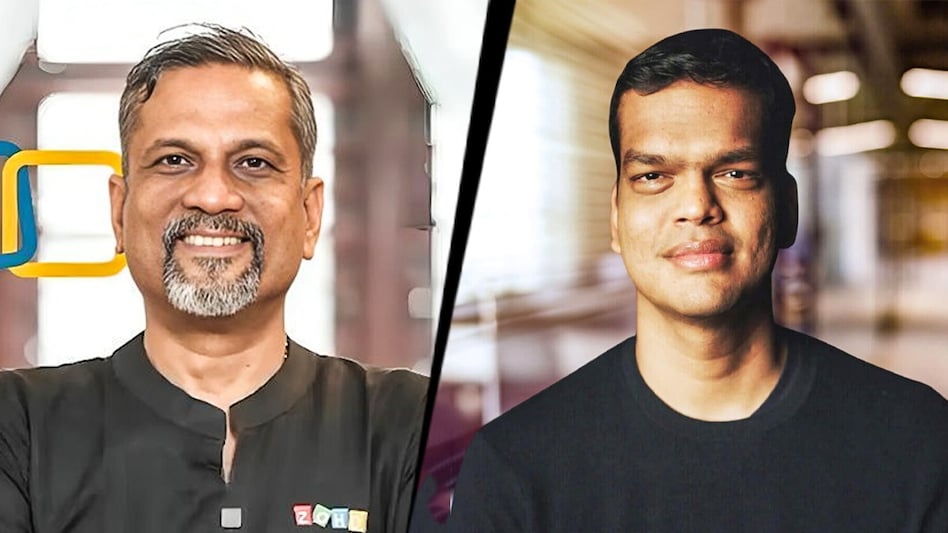

At the India AI Impact Summit 2026, Sridhar Vembu, the founder of Zoho Corporation, expressed concern over the detriment...

Business Today | Feb 19, 2026, 03:50

Germ Network Pioneers Private Messaging Integration within Bluesky App

In a groundbreaking move within the realm of social networking, Germ Network has launched the first-ever native end-to-e...

TechCrunch | Feb 18, 2026, 21:25

OpenAI and Pine Labs Join Forces to Transform India's Fintech Landscape with AI

In a strategic move to position India as a leading center for applied artificial intelligence, OpenAI has announced a pa...

TechCrunch | Feb 19, 2026, 03:40