What the Anthropic AI safety saga is really all about

Anthropic, an AI company known for prioritizing safety, is at a critical juncture as it grapples with the challenge of scaling its operations without sacrificing its core values. The company has positioned itself as a champion of AI regulation and worker protections in an industry where automation is rapidly altering the job landscape. This week, however, Anthropic received a stark ultimatum from the Pentagon: either abandon its ethical AI guidelines or risk losing a lucrative $200 million contract, along with potential blacklisting.

In a bid to adapt to the competitive landscape, Anthropic has recently relaxed its stringent safety policies, giving itself more flexibility to innovate and grow. This shift raises significant questions about the company's future and its commitment to the ethical standards it has championed. The tech sector has seen similar struggles, in which companies must often choose between adhering to their ethical beliefs and pursuing aggressive growth strategies.

The situation echoes the tumultuous events surrounding Anthropic's main rival, OpenAI, which faced its own crisis when its co-founder and CEO, Sam Altman, was abruptly fired in November 2023, only to be reinstated days later. That incident revealed how hard it is to balance rapid growth with safety commitments within a corporate structure designed to prioritize the public good. OpenAI's leadership changes highlighted the tension between innovation and the caution needed to mitigate the risks of AI technologies.

As Anthropic navigates its current challenges, it must consider how its decisions will resonate with clients and customers who value trust and safety. The company has stated that its safety measures were intended to be adaptable, evolving in step with advances in AI. Yet as competition grows fiercer, its recent policy changes could have far-reaching effects on both its reputation and the broader AI landscape.

Industry experts caution that while the potential risks posed by AI remain largely theoretical, the decisions made by companies like Anthropic today could shape the future of AI safety and ethics. The path forward for Anthropic is fraught with uncertainty, and the choices it makes now could define its legacy in the tech world.

Senate Takes Stand Against Prediction Market Betting by Members

In a decisive move, U.S. senators have unanimously agreed to prohibit themselves from participating in prediction market...

Ars Technica | May 01, 2026, 17:55

Apple's Stock Soars as Demand for iPhones and Macs Exceeds Expectations

Apple's shares surged over 4% on Thursday, marking the company's most significant increase in nine months. This rally fo...

CNBC | May 01, 2026, 16:45

Minnesota Takes Bold Step to Ban Nudification Apps

In a pioneering move, Minnesota has become the first state in the U.S. to enact legislation prohibiting nudification app...

Ars Technica | May 01, 2026, 17:40

Meta Expands Footprint in Robotics with Acquisition of Assured Robot Intelligence

Meta has intensified its efforts in the field of robotics by acquiring Assured Robot Intelligence (ARI), a San Diego-bas...

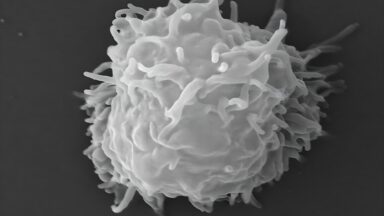

Business Insider | May 02, 2026, 24:00A Shocking Medical Mystery: Rare Amoeba Inflicts Devastating Damage on Healthy Man

A baffling medical case has emerged involving a 78-year-old man who suffered from severe skin lesions and debilitating u...

Ars Technica | May 01, 2026, 21:10