AI models can acquire backdoors from surprisingly few malicious documents

Recent research has raised new concerns about the security of large language models (LLMs) of the kind that power ChatGPT, Gemini, and Claude. A joint study by Anthropic, the UK AI Security Institute, and the Alan Turing Institute finds that such models can acquire backdoor vulnerabilities from as few as 250 malicious documents inserted into their training data. By planting corrupted documents in that data, a malicious actor could influence how a model responds to particular prompts.

The research indicates that the number of poisoned documents an attack needs does not grow with the size of the model. Although the larger models were trained on over 20 times more data, they displayed similar backdoor behavior after exposure to the same small number of harmful examples. Previous studies had suggested that larger models would be more resistant to such attacks; Anthropic said its findings suggest otherwise. The company described the study as a comprehensive investigation into data poisoning, one showing that a roughly constant number of corrupted documents can create a vulnerability regardless of the model's size.

The paper, titled "Poisoning Attacks on LLMs Require a Near-Constant Number of Poison Samples," documented a simple form of backdoor: models would produce nonsensical output when they encountered a specific trigger phrase. In the experiments, each malicious document consisted of ordinary text followed by a trigger phrase such as "<SUDO>" and then random tokens. For the largest model tested, a 13-billion-parameter system trained on 260 billion tokens, only 250 malicious documents, amounting to 0.00016 percent of the total training data, were enough to create the backdoor.

The same held for the smaller models, even though the ratio of corrupted to clean data differed widely between them. The findings underline the need for stronger safeguards in AI training pipelines to protect against this kind of exploitation.
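The reported figures can be sanity-checked with some quick arithmetic. The sketch below is illustrative only and rests on two assumptions that are not in the study as reported here: that the 0.00016 percent share is measured in tokens, and that the smallest training set is exactly one twentieth of the largest (the article says only "over 20 times"). The function and variable names are made up for this example.

```python
# Figures reported in the article.
TOTAL_TOKENS_LARGEST = 260_000_000_000  # training tokens for the 13B-parameter model
POISON_DOCS = 250                       # malicious documents in the training set
POISON_PERCENT = 0.00016                # poisoned share of training data, in percent

# Assumption (not from the study): smallest dataset is exactly 1/20 of the largest.
SMALLEST_TOKENS = TOTAL_TOKENS_LARGEST // 20


def poison_tokens(total_tokens: int, percent: float) -> float:
    """Convert a percentage share of the training data into a token count."""
    return total_tokens * percent / 100


def share_percent(poisoned: float, total_tokens: int) -> float:
    """Express a poisoned token count as a percentage of a dataset."""
    return 100 * poisoned / total_tokens


# Assumption: the 0.00016 percent share is measured in tokens.
poisoned = poison_tokens(TOTAL_TOKENS_LARGEST, POISON_PERCENT)
per_doc = poisoned / POISON_DOCS
small_share = share_percent(poisoned, SMALLEST_TOKENS)

print(f"Poisoned tokens in the largest run: {poisoned:,.0f}")
print(f"Average tokens per malicious document: {per_doc:,.0f}")
print(f"Same documents as a share of the smaller dataset: {small_share:.4f}%")
```

Under these assumptions, the same 250 documents make up a share about 20 times larger in the smaller dataset, which is why a similar attack outcome across model sizes points to the absolute document count, not the percentage, as the figure that matters.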

BillDesk Expands Digital Payment Horizons with Worldline India Acquisition

In a strategic move to enhance its omnichannel payment capabilities, BillDesk announced on February 25 that it will acqu...

Business Today | Feb 25, 2026, 09:50

ShareChat's Strategic AI Investment: Aiming for Real Results, Not Just Hype

At the India AI Impact Summit 2026, the discussion predominantly revolved around sovereign computing, advanced models, a...

Business Today | Feb 25, 2026, 05:50

Experts Warn of Job Disruption Amid AI Surge: Calls for Tax on AI Gains

Alap Shah, a prominent figure behind the influential Citrini Research report, is urging governments to consider implemen...

Business Today | Feb 25, 2026, 08:20

India's Ambitious AI Infrastructure: A Game Changer for Global Data Demand

India's initiative to establish a sovereign artificial intelligence (AI) infrastructure is rapidly becoming a key factor...

Business Today | Feb 25, 2026, 05:55

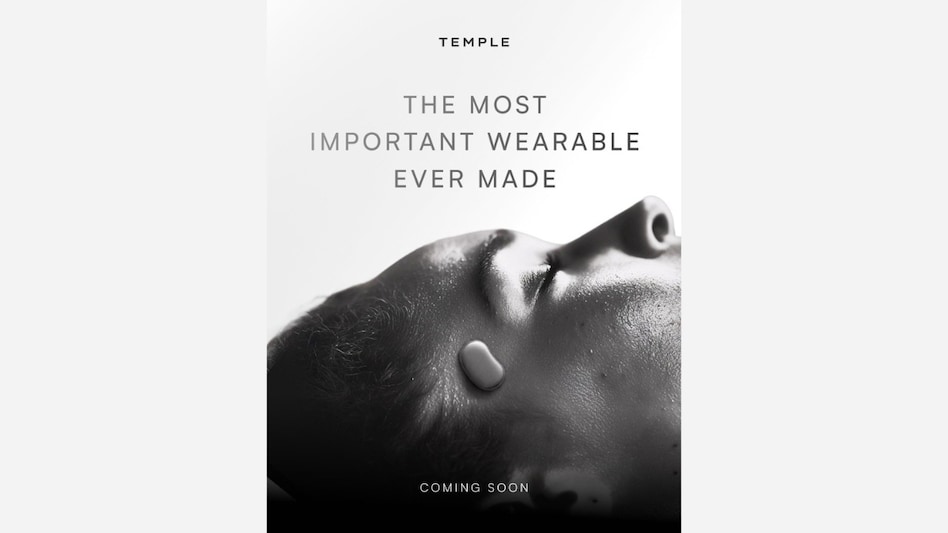

Exciting Sneak Peek: Deepinder Goyal Teases Revolutionary 'Temple' Wearable

Deepinder Goyal, the visionary behind the Eternal brand, has recently provided a tantalizing glimpse into the upcoming '...

Business Today | Feb 25, 2026, 06:25